Welcome!

Email: melody.huang@yale.edu

Twitter: @melodyyhuang

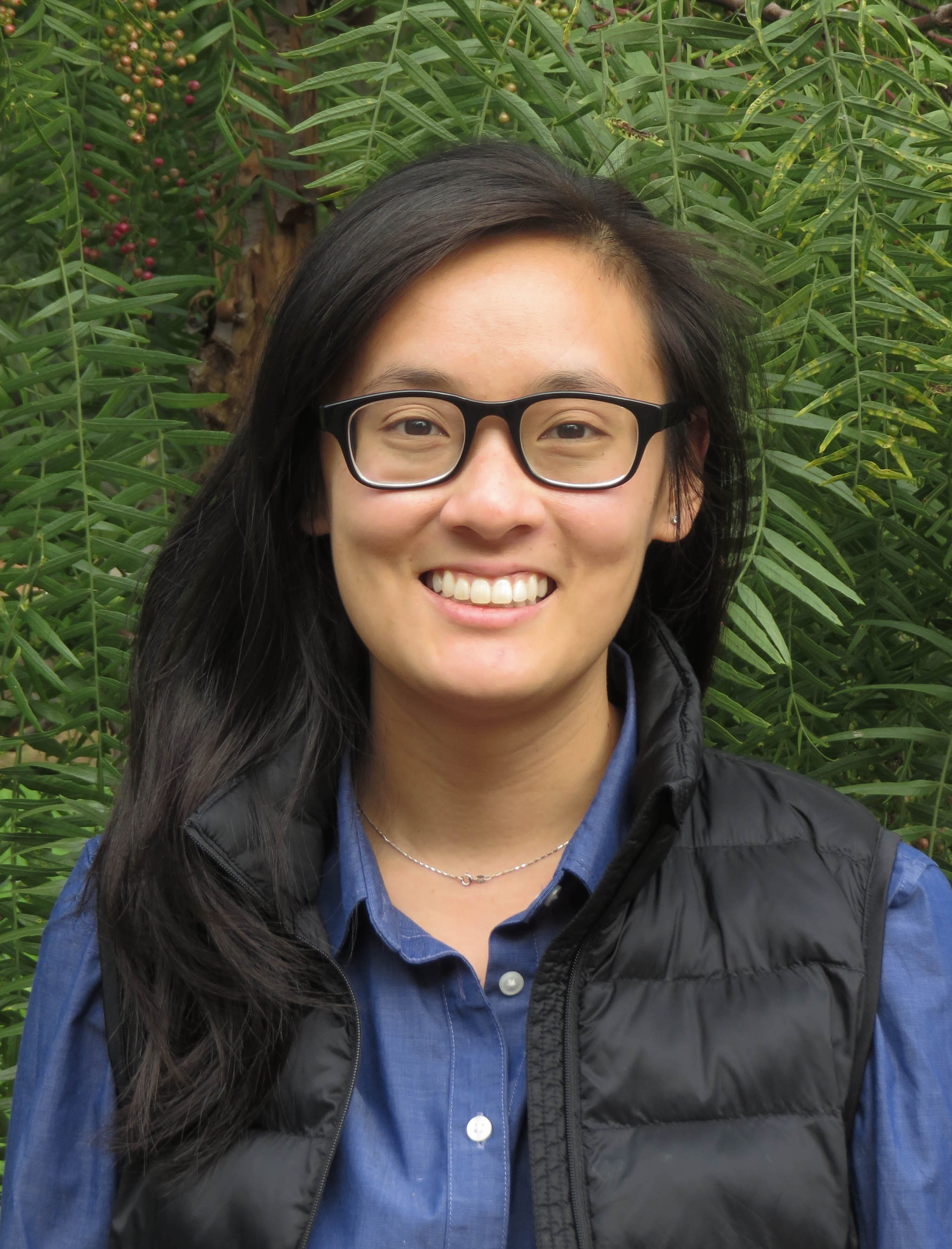

I'm currently an Assistant Professor of Political Science and Statistics & Data Science at Yale. My research broadly focuses on developing robust statistical methods to credibly estimate causal effects under real-world complications.

Before this, I was a Postdoctoral Fellow at Harvard, working with Kosuke Imai. I received my Ph.D. in Statistics from the University of California, Berkeley, where I was fortunate to be advised by Erin Hartman.

Recent News
